The Filebeat settings used here, and what each one does:

- `hosts`: sends the output to Logstash. Use the public IP address of the host.
- `tail_files: true`: monitors each file and reads new content from the end of the file, instead of re-reading and re-sending the file from the beginning. If the file has already been processed, the registry file needs to be deleted for this to take effect.
- `encoding: utf-8`: the file encoding used to read data containing international characters.
- `multiline.timeout: 10s`: once this timeout expires, the lines collected and matched so far are sent as one log.
- `multiline.max_lines: 10000`: if a multiline message has more lines than this number, the excess lines are discarded.
- `multiline.match: after`: after a line matches the pattern, it is merged with the lines that follow it into one log.
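As a hedged sketch, the settings listed above could sit together in a single `log` input like the one below. The log path, the date pattern and the Logstash address are placeholders assumed for illustration; they are not values taken from the original setup.

```yaml
# filebeat.yml (sketch, not the original file)
filebeat.inputs:
  - type: log
    enabled: true
    paths:
      - /var/log/app/*.log            # hypothetical path, point this at your own logs
    encoding: utf-8                   # encoding used to read the files
    tail_files: true                  # start at the end of files not yet in the registry
    # Assumed pattern: a line that starts with a yyyy-MM-dd date opens a new log entry.
    multiline.pattern: '^[0-9]{4}-[0-9]{2}-[0-9]{2}'
    multiline.negate: true            # lines that do NOT match the pattern are continuations
    multiline.match: after            # continuations are appended after the matching line
    multiline.max_lines: 10000        # lines beyond this count are discarded
    multiline.timeout: 10s            # flush a pending entry after 10 seconds

output.logstash:
  # Placeholder address; 5044 is the port conventionally used by Logstash's beats input.
  hosts: ["203.0.113.10:5044"]
```

With `negate: true` and `match: after`, a stack trace that follows a dated line is shipped as part of that line's event rather than as separate events.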

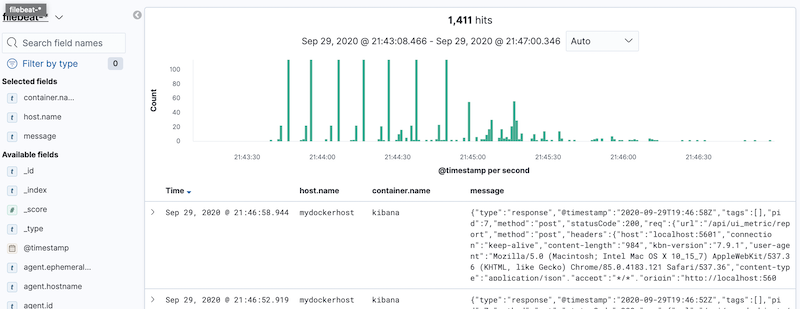

`multiline.negate: true` controls whether the pattern condition is negated. The pattern here is a date regular expression, so with `negate: true` a line that starts with yyyy-MM-dd is treated as the first line of a log, and the following lines that are not in this format are aggregated with it into one log output. If `negate` is set to false instead, `multiline.match: after` means that lines matching the pattern are merged with the preceding content into one log. `multiline.pattern` is the regular expression used to decide whether a line belongs to the same format.

ELK+Filebeat is mainly used in log systems and includes four components: Elasticsearch, Logstash, Kibana and Filebeat, also collectively referred to as the Elastic Stack. The installation process with docker compose (stand-alone version) is described in detail below. After testing, it can be applied to versions 6.8.1 and 7.8.0.

(1) Create the related directory paths. Create an elk directory: `sudo mkdir elk && cd elk`. Then create the elasticsearch, logstash, kibana and filebeat directories, together with the configuration files for each directory that need to be mounted in the containers: `mkdir -p ...`

Next, the Filebeat input and output interfaces are defined, beginning with `filebeat.inputs:` ...

We launch the test application, generate log messages and receive them in the following format: ...

Filebeat also has out-of-the-box solutions for collecting and parsing log messages for widely used tools such as Nginx, Postgres, etc. For example, to collect Nginx log messages, just add a label to its container, `co.elastic.logs/module: "nginx"`, and enable hints in the config file. After that, we get a ready-made solution for collecting and parsing log messages, plus a convenient dashboard in Kibana.
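As a rough illustration of the Filebeat side described above, the input, hints and output settings might look like the following. This is a sketch under assumptions: the log path, the `logstash` host name and the port are hypothetical, and the original config file is not reproduced here.

```yaml
# filebeat.yml (sketch; paths and host names are assumptions)
filebeat.inputs:
  - type: log
    paths:
      - /var/app/log/*.log      # hypothetical directory where the application logs are mounted

# Hints-based autodiscover: Filebeat inspects running containers and applies
# co.elastic.logs/* labels, for example co.elastic.logs/module: "nginx".
filebeat.autodiscover:
  providers:
    - type: docker
      hints.enabled: true

output.logstash:
  hosts: ["logstash:5044"]      # assumed service name and beats port
# Note: module parsing relies on Elasticsearch ingest pipelines; with a Logstash
# output, equivalent parsing has to be set up separately.
```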

A volume is created in `docker-compose.yml` (`version: "3.8"`) to store log files outside of the containers.
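A minimal sketch of such a compose file is shown below, assuming a named volume shared between an application container and Filebeat. The service names, the application image, the mount paths and the volume name `app-logs` are all hypothetical; only `version: "3.8"` and the Nginx label come from the text.

```yaml
# docker-compose.yml (sketch; service names, images and paths are assumptions)
version: "3.8"

services:
  app:
    image: my-app:latest                  # hypothetical application image
    volumes:
      - app-logs:/var/app/log             # the application writes its log files here

  filebeat:
    image: docker.elastic.co/beats/filebeat:7.8.0
    user: root                            # lets Filebeat read Docker's log files and socket
    volumes:
      - app-logs:/var/app/log:ro          # same volume, mounted read-only
      - ./filebeat/filebeat.yml:/usr/share/filebeat/filebeat.yml:ro
      - /var/lib/docker/containers:/var/lib/docker/containers:ro
      - /var/run/docker.sock:/var/run/docker.sock:ro

  nginx:
    image: nginx:stable
    labels:
      co.elastic.logs/module: "nginx"     # picked up when hints are enabled in Filebeat

volumes:
  app-logs:                               # named volume, so logs persist outside the containers
```

Because the volume is declared at the top level, the log files survive when the `app` container is removed and recreated.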
